It’s Q2 2026 and companies still think AI means one thing: GenAI. Large language models. Chatbots. Content generation.

That’s not AI. That’s one tool in a 50-year-old toolkit.

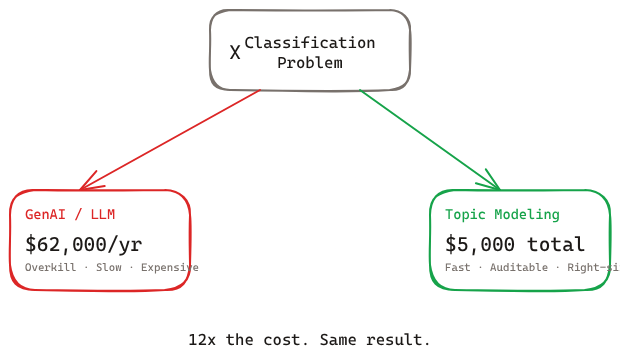

Classification problems have classifiers. Forecasting problems have regression. Anomaly detection has isolation forests. Survival problems have survival models. Each one is cheaper, faster, and more accurate than GenAI for its specific job.

And yet, companies are paying 12x more for GenAI solutions to problems that $5,000 of classical ML handles better. Not to mention that the ROI on the GenAI route is nonexistent.

That’s the AI Confusion Tax. You’re paying it whether you see the invoice or not.

The Case

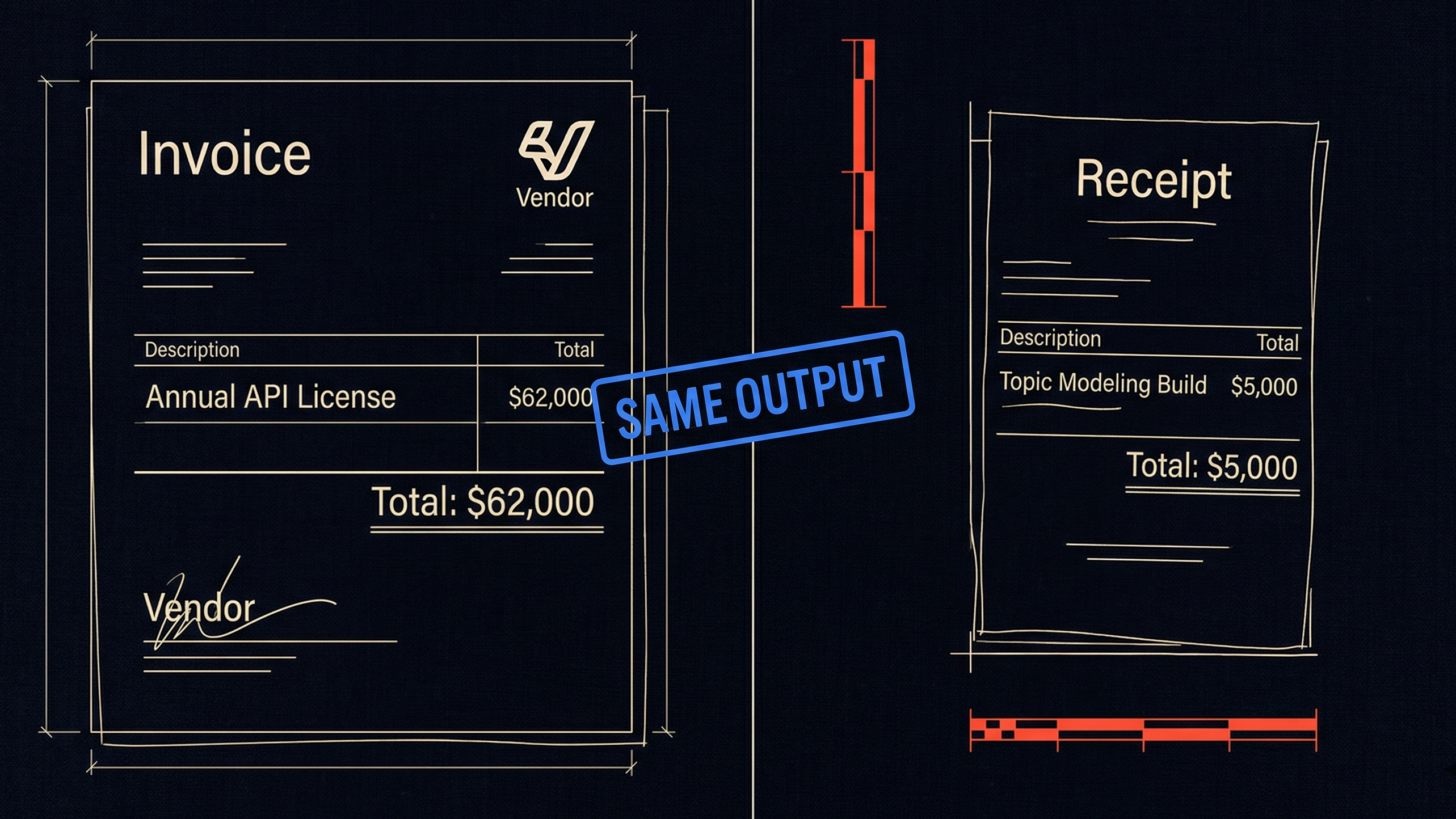

This happened. A vendor quoted $62,000 a year for a supplier categorization problem. Implementation: $50K. Recurring API costs: $1K per month.

The same job done with topic modeling: $15–20K to build. $10 a month to run.

They almost signed. They had no idea another way existed. Let that sink in!
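To see how the two quotes diverge over time, here is a minimal sketch of the arithmetic. The dollar figures are the ones quoted above; the 36-month horizon and the helper name `cumulative_cost` are assumptions added for illustration.

```python
def cumulative_cost(build: float, monthly: float, months: int) -> float:
    """One-time build cost plus recurring run cost over `months`."""
    return build + monthly * months

MONTHS = 36  # assumed comparison horizon, not from the vendor quote

# GenAI quote: $50K implementation, $1K per month in API costs.
first_year_genai = cumulative_cost(50_000, 1_000, 12)
genai = cumulative_cost(50_000, 1_000, MONTHS)

# Topic modeling: $15-20K to build, $10 per month to run.
topic_low = cumulative_cost(15_000, 10, MONTHS)
topic_high = cumulative_cost(20_000, 10, MONTHS)

print(f"GenAI, year 1:    ${first_year_genai:,.0f}")   # $62,000
print(f"GenAI, 3 years:   ${genai:,.0f}")              # $86,000
print(f"Topic model, 3 y: ${topic_low:,.0f} to ${topic_high:,.0f}")  # $15,360 to $20,360
```

The build cost is a one-off either way. The recurring line is where the two options keep drifting apart: $1,000 a month against $10.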

This isn’t rare. RAND Corporation research puts the AI project failure rate at over 80%, twice the rate of non-AI technology projects. MIT’s 2025 report found 95% of generative AI pilots at enterprises are failing. And S&P Global’s 2025 survey found 42% of companies abandoned most of their AI initiatives in 2025, up from 17% in 2024.

The numbers keep climbing. Not because AI doesn’t work. Because the wrong AI gets applied to the wrong problem, at the wrong cost, with nobody asking the right questions upfront.

What is the AI Confusion Tax?

The AI Confusion Tax is the hidden cost companies pay when they select an AI solution before defining the problem it needs to solve. It shows up as overspend on the wrong tools, mismatched model types, and months of data preparation that never connects to a business decision.

It’s structural, not malicious. Vendors pitch what they sell. Companies don’t know the alternatives exist. The gap between those two facts is where the tax lives.

Three patterns account for the bulk of it.

1. Wrong AI type for the problem

A glass manufacturer wanted to organize its supplier base: which suppliers sell which SKUs, and how to consolidate for better negotiating power. An AI vendor came in with a solution built on LLM APIs. Expensive, slow, hard to explain to a supply chain manager.

The problem was text categorization. Topic modeling — LDA, basic NLP, even keyword extraction — would have solved it at a fraction of the cost. These are solved problems. They’re auditable. They’re cheap.

Recent research backs this up: fine-tuned small language models outperform GPT-4 on 85% of classification tasks while costing 10-100x less per inference. The hidden operational costs of LLMs — token monitoring, latency tracking, security audits — add 20-40% on top of the sticker price.

The vendor sold LLM because they sell LLM. The company bought it because they didn’t know topic modeling existed.

Same result. 12x cost difference. That’s not a technology gap. That’s an information gap — generative AI is one tool in the toolbox, not the toolbox itself.
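For a sense of how small the classical end of this spectrum can be, here is a minimal sketch of keyword-based supplier categorization in plain Python. It is keyword matching, not LDA, and it is not what anyone in this story actually built. The category names, keyword lists, and sample descriptions are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical categories and keywords. In practice these would come from
# procurement staff or from a topic model fitted on real supplier text.
CATEGORY_KEYWORDS = {
    "raw_materials": {"silica", "sand", "soda", "ash", "limestone", "cullet"},
    "packaging": {"pallet", "pallets", "crate", "crates", "carton", "cartons"},
    "logistics": {"freight", "trucking", "haulage", "shipping", "warehouse"},
}

def tokenize(text):
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())

def categorize(description):
    """Return the category whose keywords appear most often, else 'uncategorized'."""
    counts = Counter()
    for token in tokenize(description):
        for category, keywords in CATEGORY_KEYWORDS.items():
            if token in keywords:
                counts[category] += 1
    if not counts:
        return "uncategorized"
    return counts.most_common(1)[0][0]

for text in [
    "Bulk silica sand and soda ash supply",
    "Wooden pallets and shipping crates",
    "Office catering services",
]:
    print(f"{text!r} -> {categorize(text)}")
```

Every assignment traces back to a keyword a supply chain manager can read and challenge. That is the auditability an LLM API call does not give you by default.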

2. Wrong problem selected

This one costs the most over time.

A food company wanted to predict when their inventory would expire. They hired a team to build a classification model — will this item decay or not?

The model never produced useful outputs. Low confidence, poor predictions, no usable time window.

Nobody asked the right question.

This isn’t a classification problem. It’s a survival analysis problem. You don’t need to know whether the item will decay. You need to know when it will decay — and how much time you have to act before it does.

The difference matters. Classification gives you a yes/no on the day you check. Survival analysis gives you a time window. In inventory management, that window is everything — it’s the difference between selling at full margin and writing off the batch.
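Here is a minimal sketch of what that time window looks like, using a hand-rolled Kaplan-Meier estimator, one standard survival analysis technique. The batch data is invented for illustration, and the function names are assumptions. A real project would more likely use an established survival analysis library.

```python
def kaplan_meier(durations, observed):
    """Return [(day, survival_probability)] at each day a spoilage event occurred.

    durations: days each batch was tracked
    observed:  True if the batch spoiled on that day, False if it was sold
               or left tracking first (censored)
    """
    event_days = sorted({d for d, e in zip(durations, observed) if e})
    survival = 1.0
    curve = []
    for day in event_days:
        at_risk = sum(1 for d in durations if d >= day)
        events = sum(1 for d, e in zip(durations, observed) if d == day and e)
        survival *= 1 - events / at_risk
        curve.append((day, survival))
    return curve

def first_day_at_or_below(curve, threshold):
    """First day the estimated survival probability drops to `threshold` or lower."""
    for day, prob in curve:
        if prob <= threshold:
            return day
    return None

# Hypothetical batches: days tracked, and whether spoilage was actually seen.
durations = [5, 6, 6, 8, 9, 10, 10, 12]
observed = [True, True, False, True, True, False, True, True]

curve = kaplan_meier(durations, observed)
for day, prob in curve:
    print(f"day {day:>2}: {prob:.2f} of batches still sellable")

print("Act before day", first_day_at_or_below(curve, 0.5))  # Act before day 9
```

A yes/no classifier would throw away the two batches that were sold before spoiling. The survival estimate keeps them as censored observations, which is part of why it yields a usable window instead of a low-confidence label.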

The same mistake appears in churn prediction. Teams build classification models — will this customer churn? But customers don’t just switch overnight. They gradually reduce engagement, then spending, then leave. A classification model catches none of that progression. A survival model does.

The team blames the data. The data is fine. The model type was wrong from the start. The root cause is almost never data quality. It’s problem framing.

3. Data before decision

This one is expensive in a different way. It costs you in delay.

Every manufacturing and FMCG company I’ve worked with has started an AI project by running a data audit. Six months of cleaning, structuring, waiting for the right data.

That delay costs $100K per quarter in lost optimization value.

The question nobody asks early enough: what decision are we trying to improve?

If you don’t know that, you don’t know which data matters. You end up preparing data that has nothing to do with your actual problem. The order matters. Decision first. Data second. Model third.

Vendors don’t push back. They scope the data work. The delay is yours.

The pattern underneath

Vendors pitch what they sell. Companies don’t know the alternatives. Nobody defines the problem before selecting the solution.

Every AI decision — vendor selection, project scoping, budget approval — has this tax embedded in it. Sometimes it’s $57K a year on the wrong tool. Sometimes it’s $100K a quarter in data prep delay. Sometimes it’s a model that works technically and delivers nothing commercially.

The sticker price on an AI vendor quote is only 40-55% of your actual total cost of ownership. The rest is hidden in integration, governance, and waste from solving the wrong problem.
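As a rough sketch of what that share implies, the snippet below backs out a total-cost range from a quoted price. It assumes the 40-55% share applies to the whole quote, and it uses the $62,000 figure from the case above as the example input. The function name is hypothetical.

```python
def implied_tco_range(sticker_price, low_share=0.40, high_share=0.55):
    """If the sticker price is low_share..high_share of TCO, return (min_tco, max_tco)."""
    return sticker_price / high_share, sticker_price / low_share

low, high = implied_tco_range(62_000)
print(f"Implied total cost of ownership: ${low:,.0f} to ${high:,.0f}")
# Implied total cost of ownership: $112,727 to $155,000
```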

Understanding which AI type fits your problem — whether it’s rules-based automation, analytics, predictive ML, or generative AI — is the single most important question you can answer before signing anything.

Before you sign the next contract

One question before any AI project gets approved: does the person scoping this understand what they’re scoping?

Not — is the model accurate? Not — is the vendor credible?

Can they tell you which AI type this problem needs? Classification, regression, clustering, survival analysis? And can they explain why that type is the right one for this specific business decision?

If they can’t answer that, they’re guessing. And you’re paying for the guess.

The confusion is solvable. You just need to know what questions to ask before anyone writes a contract.

Frequently Asked Questions

What is the AI Confusion Tax?

The AI Confusion Tax is the hidden cost companies pay when they select an AI solution, usually GenAI, before defining the problem it needs to solve. It shows up as 12x overspend on the wrong tools, mismatched model types, and months of data preparation that never connects to a business decision.

Why do companies pay the AI Confusion Tax?

Vendors pitch what they sell. Most leaders don’t know that for a well-defined business problem there is usually a specific ML model that’s cheaper, faster, and more accurate than GenAI for that job.

How much does the wrong AI cost?

Companies routinely pay 12x more for GenAI solutions to problems that $5,000–$20,000 of classical ML handles better. A vendor quoted $62,000/year for a supplier categorization problem. The same job done with topic modeling: $15–20K to build, $10/month to run.

How do you avoid the AI Confusion Tax?

Before approving any AI project, ask: can the person scoping this tell you which AI type this problem needs — classification, regression, clustering, survival analysis — and why that type is the right one for this specific business decision? If they can’t answer, they’re guessing. And you’re paying for the guess.

If your AI projects are running into walls you can’t explain, the AI Strategy Audit finds the confusion tax in your current initiatives — and tells you exactly what to fix. Or start with the AI Profit Quotient to find out where your company actually sits on the AI spectrum.